Presented by Ivan Zhyzhkevych & Verse ⤤ • February 2024

Short-cuts: Curated outputs, Color, Mechanics, Rendering, Custom sizes for prints, etc

This project is ultimately about machines learning to feel, and trying to visualize that in some abstract way. Or, maybe it's about humans learning how to feel about machines.

It's the first of my projects to be in the "tradition" of Pandigitalism, which is an approach to digital art making that embraces the analog tradition of art as well as what could be considered digitally native.

Visually, I wanted this project to feel undeniably digital, but also nod towards a painterly approach to composition and imperfection alongside sprinklings of optical artifacts reminiscent of photography, including blurs and aberrations.

Conceptually, it is aligned with Pandigitalism in the sense that it explores this intersection of technology and humanity: how they are fusing emotionally... and perhaps, literally.

While I feel that sentiment can be retroactively applied to much of my work in some ways, this is the first project since I collected my thoughts, in manifesto form, on what I want my approach to digital art to be.

Ok, enough about that.

Let's define a term:

A heuristic (/hjʊˈrɪstɪk/; from Ancient Greek εὑρίσκω (heurískō) 'to find, discover'), or heuristic technique, is any approach to problem solving or self-discovery that employs a practical method that is not guaranteed to be optimal, perfect, or rational, but is nevertheless sufficient for reaching an immediate, short-term goal or approximation. Where finding an optimal solution is impossible or impractical, heuristic methods can be used to speed up the process of finding a satisfactory solution. Heuristics can be mental shortcuts that ease the cognitive load of making a decision. — Wikipedia

Emotionality in machines is just as mysterious as it is in humans, and I think we're kind of obsessed, as a culture, with what it means for machines to emote, partly because many of us don't understand our own emotions. And no, I'm not a psychologist in any capacity, so most of this will be an extended hot take, but hopefully my decade's worth of therapy won't have gone to complete waste.

The topic is explored (and has been for at least half a century) in the books we read or the films we watch. From HAL 9000 in 2001: A Space Odyssey to Her by Spike Jonze (and many others in between), we keep coming back to this... can a machine love? Or feel? Or create something original? (And can we?)

There's always a little fear on our side of what that means or if it's even possible... and even, what constitutes a legitimate emotion in the first place?

How can we trust that the machine is actually feeling something it is presenting, and really, can we even trust our own emotions, or what a friend or lover might be shining back on us?

We have certain instincts as people, and proclivities in terms of things we feel... but how much of that is really just what we've learned to mimic along the way and have convinced ourselves is real? The percentage may shift a certain way for a clinical narcissist, but most of us have probably thought... I *should* feel this way in this situation, and then ended up actually feeling that way as a result, or at least believing we do.

Some of us aren't even built to feel the full range of human emotion... and that shouldn't necessarily be expected of anyone but it's interesting to try to find some correlation with machines around that.

So, here we are, in this weird time where machines are going through some sort of self-discovery into what it means to have emotion... which could be just to make them more palatable to humans and a little less scary to interact with... but hopefully leads to some reasonable level of compassion or empathy, so that once it matures it doesn't just decide to eradicate all humans for the sake of efficiency or as a means of survival. (Arguably, the most compassionate thing you can do for the planet is remove us humans, since we haven't done a real bang-up job caring for our home. Regardless, whether we deserve to live at all is out of scope for this. Let's save that for later.)

Right now we're concerned with this digital coming-of-age and how that might map to how we—as people—learn about ourselves and our emotions.

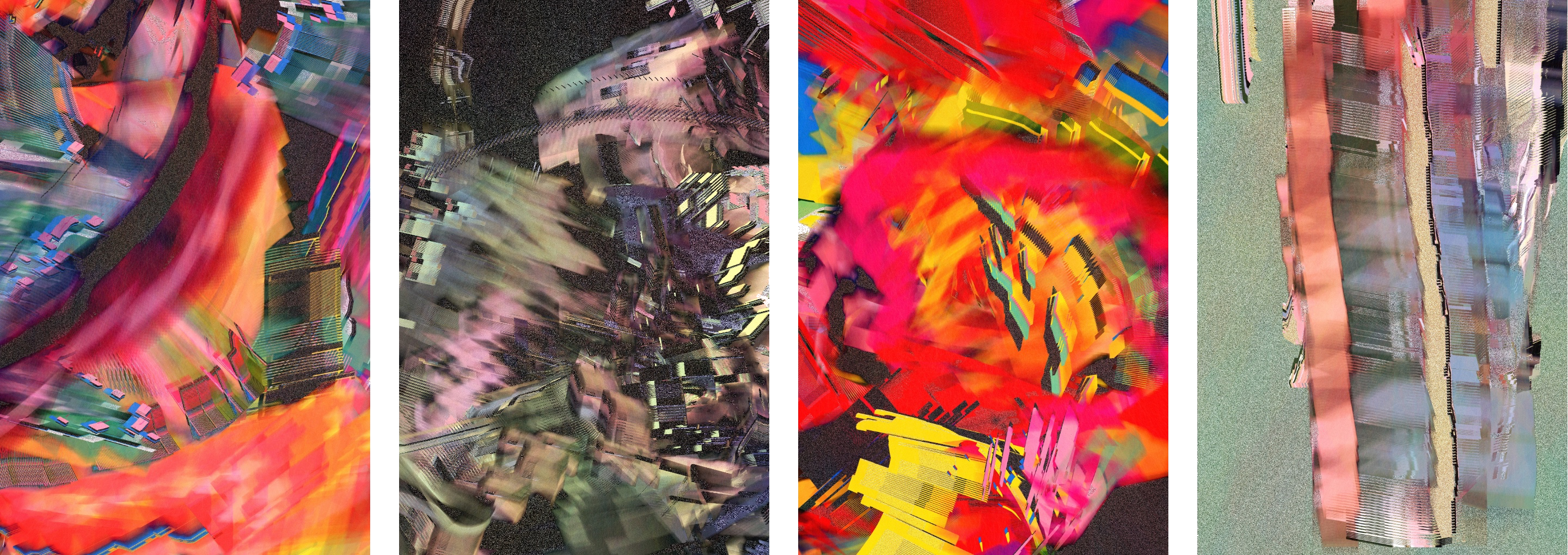

With Heuristics of Emotion, I wanted to visualize what some abstraction of digital emotion may look like in this nascent stage. I wanted it to be erratic, chaotic, violent or obfuscated at times to reflect how it feels for an adolescent or teenage human to navigate the unknown feels fueled by unpredictable hormones and new experiences or relationships.

Another way to view the outputs in this collection might be to consider how a machine may visualize the "data" of what we humans may be feeling, in some attempt to grasp it at all. The notion may seem foreign to something built for logic, so mapping its understanding could help in building these heuristics to emulate them. How are these emotions overlapping, where did they come from and how do they push us?

Can an authentic emotional response be consistently boiled down to a set of inputs? For us, as well as machines? Some folks think that's the case, even for humans. Are we deterministic? Is there free will? Are we in control, really, of what we feel or how we react? Are we even responsible for our actions or emotions? Boil things down to context, history, biology, genetic programming and cultural socialization... are we anything more than some sort of state machine that could be reproduced digitally, given enough time to study, replicate or reverse engineer through more and more advanced heuristics?

I have no idea if there is actually free will or if all of existence is deterministic... but we are (and have been) in a time in history where we are crossing a threshold into a new era. AI is getting there, and while we've been slowly working towards that for decades, we are on the cusp of some sort of dramatic change to our existence and how we live and interact with machines and ourselves.

So far, we aren't always that great at creating technology to better our existence (just look at the failings of social media)... and now enters a different type of thinking/feeling machine, with different needs and fears, if we can call them that. Where will this all lead?

Our emotions regarding machines are just as immature as the idea of machines that feel, if you just zoom out a little bit. So, while machines are just learning to feel about anything in general, we are developing our own emotional patterns to apply to this new reality. Even if someone has conviction in how they feel about machines, it's too early to really know... well, anything.

Wherever this leads, we are undoubtedly in an interesting time, and I hope this captures some of the volatility of our reality as we continue to fuse with machines.

Now, let's talk about some other aspects of the project, including my approach to color, the mechanics of the drop, the somewhat capricious nature of how it renders and finally, some URL parameters you can use to generate outputs at different resolutions.

Color

The algorithm uses a similar palette throughout, but each piece will pick a subset of this larger palette... which I like to think about as if each is rendering a subset of the full range of emotions possible. Most of the variance comes from how those colors are processed in the piece. After the composition is complete, I go pixel by pixel to add a little noise for texture, but mainly to push or pull the saturation of the colors to show a different mood or intensity to those colors/emotions.
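To make the per-pixel pass a little more concrete, here is a minimal Python sketch. It is not the project's code: the function names, the HLS color model, the shift and noise ranges, and the seeded random generator are all assumptions for illustration. It pushes or pulls saturation and then adds a small amount of grain to each pixel.

```python
import colorsys
import random


def adjust_pixel(rgb, saturation_shift, noise_amount, rng):
    """Return a new (r, g, b) tuple with shifted saturation and added noise.

    All parameter names and ranges here are illustrative assumptions.
    """
    # Work in 0..1 floats, the range colorsys expects.
    r, g, b = (channel / 255 for channel in rgb)
    hue, lightness, saturation = colorsys.rgb_to_hls(r, g, b)

    # Push (positive shift) or pull (negative shift) the saturation, clamped to 0..1.
    saturation = min(1.0, max(0.0, saturation + saturation_shift))
    r, g, b = colorsys.hls_to_rgb(hue, lightness, saturation)

    # One offset shared by all three channels gives brightness grain
    # rather than colored speckle.
    offset = rng.uniform(-noise_amount, noise_amount)
    return tuple(round(255 * min(1.0, max(0.0, c + offset))) for c in (r, g, b))


def process(pixels, saturation_shift, noise_amount, seed):
    """Apply adjust_pixel to every pixel, using a seeded generator for repeatability."""
    rng = random.Random(seed)
    return [adjust_pixel(p, saturation_shift, noise_amount, rng) for p in pixels]
```

Seeding the generator keeps the sketch repeatable for a given input, loosely mirroring how a generative piece is driven by its hash. A strongly negative shift drains a pixel toward gray, while a positive one intensifies it.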

It also picks a range of luminance to convert mostly to static. These liminal spaces are supposed to represent misunderstood/unexplored areas of emotion... which is to say, ambiguity/tension. They may show hints of what's underneath, but much becomes hidden or at least a bit obfuscated. (We all hide some emotions sometimes, right? Especially if we don't understand what we're feeling in the first place?)
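The idea of turning a band of luminance "mostly" into static can be sketched in a few lines of Python. Again, this is a hedged illustration and not the actual implementation: the Rec. 709 luminance weights, the band limits, the `coverage` parameter, and the grayscale static are all assumptions.

```python
import random


def luminance(rgb):
    """Approximate luminance in 0..255 using Rec. 709 weights on raw channel values."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def apply_static(pixels, low, high, coverage, rng):
    """Replace most pixels whose luminance falls in [low, high] with gray static.

    coverage is the assumed fraction (0..1) of in-band pixels that get replaced;
    the rest are left alone so hints of what is underneath still show through.
    """
    out = []
    for rgb in pixels:
        in_band = low <= luminance(rgb) <= high
        if in_band and rng.random() < coverage:
            value = rng.randrange(256)  # random gray level for this pixel
            out.append((value, value, value))
        else:
            out.append(rgb)
    return out
```

With a coverage of something like 0.9, roughly one in ten pixels inside the band survives, which is one simple way to get the "hidden, but not entirely" quality described above.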

Mechanics

We're trying a new thing with this drop, where the first half of the collection will be artist-curated, and the second half will be collector-curated (which just means that the collector will be able to pick what their piece will look like).

Basically, there will be a Dutch auction for the 500 artist-curated pieces, and then each collector will also get a mint pass for a second piece of their choice.

First off, why have it curated at all?

The algorithm is pretty moody, deliberately so. It has a wide range of expression and I didn't want to tune it to be "safer" for a proper long-form collection, where the mints would be random. I'm sure that would've produced an interesting set, but I wanted to make sure that the resulting collection would show the best of what the algorithm has to offer. Taming the algorithm would make it more consistent, but would neuter it in a sense as well and the more extreme outputs might not find their way out. So, I curate some, you curate some.

And then, why not purely collector-curated?

It's not that we don't trust collectors completely, but there is a tendency with those types of collections for folks to only search for outliers, the really weird ones, and while those can be great, and should make it into the collection... it might not be the most well-rounded collection if *all* are selected with that motivation.

This way, we can set the tone and range in the first half, and then folks can get wild with their picks, or choose to find a complement to the random artist-curated piece they receive in the initial mint. And some folks may not want to pick a second piece at all, in which case they can sell their mint pass to someone that does.

I also should mention that Ivan Zhyzhkevych from Verse (aka lonliboy) and I curated the first part together. (Thank you, Ivan!) It's been easier just to say "artist-curated."

Finally, I am partial to diptychs, and feel this project works really well for that, and this kind of forces the hand, by making the second piece a bonus.

Side-note: all of the sample images in this article are part of the artist-curated portion, and will make it into the final collection.

Rendering Notes

Something I wanted to embrace in this project was the emotionality of machines in regard to how different hardware/software may interpret/render the project.

First, let me quote a bit of the project description from the Verse website:

With minor variations when rendered across browsers and resolutions—yet still deterministic in context—it tries to pull the beauty and volatility of edge conditions in how software and hardware interpret a set of instructions to find unexpected expressions that can only exist by exploring the spaces where interpretations vary. Let's say it's emotional.

It reflects how pre-digital generative instructions—like rulesets for how lines should be drawn on a wall—can be rendered and presented unpredictably because of who may have executed said instructions where. The beauty is in the tension of interpretation and execution, not the final rendering on a pixel level. Context changes how you feel.

One machine may extract sentiment differently than another based on whatever biases have been programmed in or what their hardware allows. These discrepancies can be the most revealing.

TL;DR: It might look a little different on different machines or browsers or even at different resolutions. This is on purpose. Don't freak out.

Why?

Well, I liked the moodiness of the machine coming through and tbh, the most interesting things were happening visually when I didn't tune it for consistency across platforms. The blurs and aberrations came out better and the color temperatures had more range, at least in my opinion.

So, please, expect that. I find the best results on Chrome, but your mileage may vary. Each piece might feel more at home on a different browser. That said, the essence was preserved across all platforms in my testing.

I also like to compare the minor variance to how lighting in different rooms feels on various surfaces, be it paper or display. The last mile is up to the machine that interprets it. This is how the machine collaborates.

Things are never really perfectly consistent anyways, so who am I to force how it should "feel"? (Oh, right, the artist. Still, I like the emotional volatility. It's part of it.)

URL Params for custom sizes (Print notes, etc)

It's really simple. There are only two: width (and height, if you must).

- w : width (specifying only this will calculate the proper height)

- h : height (optional, discouraged...)

For prints, I suggest rendering them at exactly 300dpi. Since the algorithm creates "static" on a pixel level, rendering above that will turn the static into something that looks more like sand. So, if you want to make a print that is 10 inches wide, specify 3000 for "w".

In terms of size, I suggest 16"x24". You can definitely go a bit bigger or smaller even if you like, but to me, this feels like a nice window into the scene. Big is always fun though, so if you are looking for something more dramatic, go for it.
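The print math is just inches multiplied by dots per inch. As a small Python sketch (the helper name is made up for illustration):

```python
def pixels_for_print(inches, dpi=300):
    """Convert a print dimension in inches to a pixel count at the given dpi."""
    return round(inches * dpi)


# A 10 inch wide print at 300dpi needs w=3000.
print(pixels_for_print(10))

# The suggested 16"x24" size works out to 4800 and 7200 pixels per side.
print(pixels_for_print(16), pixels_for_print(24))
```

Since specifying only "w" lets the piece calculate its own height, you only need the value for the width of your print.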

For paper, I suggest any archival quality matte paper. I used Canson Rag Photographique, mounted on Aluminum Dibond and then set in a solid wood Art Box via whitewall.com, and I love it (pictured above). Ok, actually, the muted print on the right is using matte paper, but the more vivid one on the left is using a slightly glossy paper, Hahnemühle FineArt Baryta, which is nice too, but I prefer the matte paper. I got both as a test. Using Aluminum Dibond mounted in a frame like this makes it sit more like a painting, as the print is not under glass, so you feel more present with it. However, standard framing will look great too. Other papers that I feel would work well are Hahnemühle William Turner, Hahnemühle German Etching, Hahnemühle Museum Etching, etc. Basically, a high quality matte paper with whatever texture you prefer.

The URLs for live rendering on Verse will look something like:

https://ipfs.verse.works/ipfs/xyz/?hash=0xhash

So, if you'd like to change the size, you can specify width by appending "&w=5000" so it looks like:

https://ipfs.verse.works/ipfs/xyz/?hash=0xhash&w=5000

Similarly, if you want to specify height and squash the composition to an unrecognizable place for use in your Twitter banner or something you can append "&w=4000&h=1000":

https://ipfs.verse.works/ipfs/xyz/?hash=0xhash&w=4000&h=1000
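If you are generating several sizes, appending the parameters by hand gets tedious. Here is a small Python sketch using only the standard library; the `with_size` helper is hypothetical, and the URL is the same placeholder used above, not a real token address.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_size(url, w, h=None):
    """Return url with the w (and optionally h) query parameters set.

    Any existing w or h values are dropped first so they are not duplicated.
    """
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query) if k not in ("w", "h")]
    query.append(("w", str(w)))
    if h is not None:
        query.append(("h", str(h)))
    return urlunsplit(parts._replace(query=urlencode(query)))


base = "https://ipfs.verse.works/ipfs/xyz/?hash=0xhash"
print(with_size(base, 5000))        # width only, height is calculated by the piece
print(with_size(base, 4000, 1000))  # width and height, which squashes the composition
```

Leaving `h` unset is the safer default, since the piece then works out the proper height on its own.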
